When Your Best Customer Can’t Click

by Mardoqueo Arteaga

AI;DR (or: what this is actually about): Between November 2024 and November 2025, Google organic search referrals to publishers fell 33% globally and 38% in the United States. Where did the traffic go? ChatGPT accounts for 0.02% of publisher referral traffic. Perplexity: 0.002%. It didn't migrate to AI platforms, but rather disappeared from the measurable web entirely. As generative AI answers replace search results pages, decisions are being made inside models that leave no fingerprint on anyone's analytics dashboard. McKinsey finds that only 16% of companies today systematically track their visibility in AI-generated answers. This is not primarily a technology problem. It is a textbook case of information asymmetry, and markets do not resolve those on their own.

Advertising attribution has always worked on an honor system dressed up in dashboards.

When a user clicked a paid search result and then bought something, the industry agreed to call that a conversion. What actually happened was murkier: the same user had probably seen a display ad two weeks earlier, read a comparison article, gotten a recommendation from a colleague, scrolled a late-night Reddit thread, and searched again three times before converting. The click got the credit because it was observable and attributable in a way the colleague's recommendation and the Reddit thread were not. Last-touch attribution wasn't a theory of how buying decisions happen but a theory of what could be measured. We could get fancy with multi-touch models, but bear with me.
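A toy illustration of the point, in Python. The journeys below are hypothetical (the channel names mirror the example above, and the `observable` flag is an assumption marking which touches ever reach a dashboard). Last-touch hands each conversion to the final observed click; a linear multi-touch rule spreads credit evenly across observed touches. Neither can credit what it never sees.

```python
from collections import Counter

# Hypothetical journeys: ordered (touchpoint, observable) pairs ending in a purchase.
# observable=False marks touches that never reach an analytics dashboard.
JOURNEYS = [
    [("display_ad", True), ("comparison_article", True),
     ("colleague", False), ("paid_search", True)],
    [("reddit_thread", False), ("paid_search", True)],
]


def observed_touches(journey):
    """Keep only the touchpoints an attribution system can actually see."""
    return [name for name, observable in journey if observable]


def last_touch(journeys):
    """Give each conversion entirely to the last observed touchpoint."""
    credit = Counter()
    for journey in journeys:
        seen = observed_touches(journey)
        if seen:
            credit[seen[-1]] += 1.0
    return credit


def linear_multi_touch(journeys):
    """Split each conversion evenly across all observed touchpoints."""
    credit = Counter()
    for journey in journeys:
        seen = observed_touches(journey)
        for name in seen:
            credit[name] += 1.0 / len(seen)
    return credit


if __name__ == "__main__":
    print("last-touch:", dict(last_touch(JOURNEYS)))
    print("linear:    ", dict(linear_multi_touch(JOURNEYS)))
```

On these two journeys, paid search gets all of the last-touch credit and still the largest linear share (4/3 of a conversion), while the colleague and the Reddit thread get nothing under either rule. Multi-touch changes how observed credit is divided, not what is observed.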

This mismatch between the observable signal and the underlying economic action connects to what Grossman and Stiglitz (1980) formalized: when information is costly and unevenly held, markets cannot be fully informationally efficient, and they don't allocate resources the way we might assume. Capital flows toward what can be counted, so channels that genuinely drive outcomes but remain unobserved tend to be systematically underinvested. This is exactly why word-of-mouth, editorial coverage, and earned media were always underfunded relative to their actual influence, and why the industry, knowing this, labeled those channels "unmeasurable" rather than confronting the implication.
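The budget consequence can be sketched in a few lines of Python. The influence shares below are invented for illustration, not estimates from Grossman and Stiglitz or any other source, and the allocation rule is an assumption: a marketer who splits spend in proportion to whatever credit can be observed.

```python
# Illustrative "true" influence shares by channel. These are invented numbers,
# not estimates from any study.
TRUE_INFLUENCE = {
    "paid_search": 0.30,
    "display": 0.15,
    "word_of_mouth": 0.35,
    "editorial": 0.20,
}

# Whether each channel leaves a trace that attribution can count (assumed).
OBSERVABLE = {
    "paid_search": True,
    "display": True,
    "word_of_mouth": False,
    "editorial": False,
}


def allocate(budget, weights):
    """Split a budget in proportion to non-negative weights."""
    total = sum(weights.values())
    if total == 0:
        raise ValueError("no channel has positive weight")
    return {channel: budget * w / total for channel, w in weights.items()}


def allocate_by_measured_credit(budget, influence, observable):
    """Fund channels only for the influence that can be observed.

    Unobserved channels get zero weight, however much they actually drive.
    """
    measured = {ch: (w if observable[ch] else 0.0) for ch, w in influence.items()}
    return allocate(budget, measured)


if __name__ == "__main__":
    measured = allocate_by_measured_credit(100, TRUE_INFLUENCE, OBSERVABLE)
    ideal = allocate(100, TRUE_INFLUENCE)
    for channel in TRUE_INFLUENCE:
        print(f"{channel:>14}: measured {measured[channel]:5.1f}  vs  true {ideal[channel]:5.1f}")
```

With a budget of 100, the measured-credit rule puts roughly 66.7 into paid search and 33.3 into display and nothing into word-of-mouth or editorial, even though in this made-up example those two channels account for 55% of actual influence.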

The attribution gap has been the dirty secret of digital marketing for two decades. What's changed now is its magnitude.

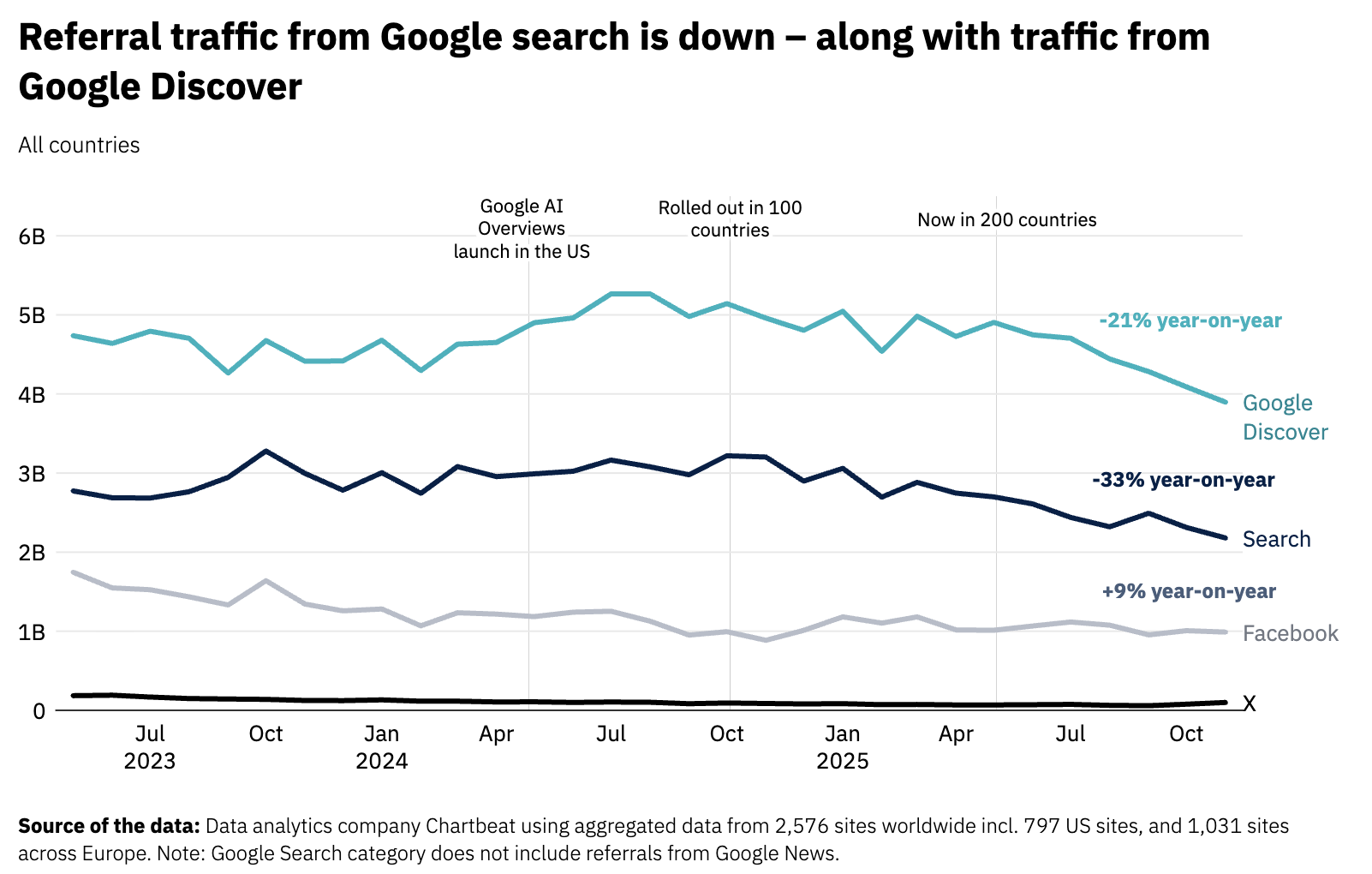

The Line Was Already Going Up

In January 2026, the Reuters Institute for the Study of Journalism at Oxford published its annual *Journalism, Media, and Technology Trends and Predictions* report. One figure in it should be making more noise in marketing circles: Google organic search referrals to publishers fell 33% globally between November 2024 and November 2025, and 38% in the United States, measured across more than 2,500 publisher websites using Chartbeat data (Newman, Reuters Institute 2026). Since May 2023, when Google began rolling out AI-generated summaries at the top of its results pages, external referrals to those same publisher sites are down 24% cumulatively.

So where did the traffic go? The answer, and this is the part that should give marketers pause, is that it mostly went nowhere measurable. ChatGPT currently accounts for approximately 0.02% of total referral traffic to publishers; Perplexity accounts for 0.002% (Newman, Reuters Institute 2026). That is a gap of at least an order of magnitude between how much traffic is leaving Google's ecosystem and how much is showing up on AI platforms. The traffic is not migrating; it is disappearing from the observable internet.

What this means, structurally, is that the decisions that used to generate a click are now being resolved before a click is ever needed. An AI assistant synthesizes sources, evaluates options, and delivers a recommendation. The user receives that recommendation without ever visiting the sites that informed it. The moment of influence, which used to be an impression or at minimum a page view, has moved into a layer of the information stack that publisher-side analytics cannot see and that most brand-side analytics have not yet been built to observe.

There is one piece of data from this period that cuts against a fully pessimistic read. Adobe Analytics, tracking more than a trillion visits to US retail sites, found that when AI-referred traffic does materialize as a click through to a retailer, those visitors behave differently from non-AI traffic: bounce rates 37% lower, conversion rates only 22% lower, and meaningfully longer time on site (Adobe Analytics, Q2 2025). The sessions that survive the AI filtering process are high-intent. But the volume is still small, and the implication is uncomfortable: the top of the funnel is being replaced by a process that happens upstream and invisibly, and what surfaces into the measurable web is a narrower, pre-qualified stream. Better signal, far less of it, and no way to influence the process that produces it.

In 2024, Gartner projected that traditional search engine volume would fall 25% by 2026, which is to say by this year. Media executives surveyed for the Reuters Institute report expect their search referrals to fall by 43% by 2029. McKinsey finds that 44% of AI-powered search users already call it their primary source for purchase decisions, ahead of traditional search at 31% (McKinsey & Company, 2025). These are not forecasts about a distant future. The decline is already in the data.

A Measurement Vacuum Is Not Neutral

I want to be precise about what breaks when markets lose their signals, because I think the industry is describing this shift mostly in the language of strategy and tooling rather than in terms of the economic mechanism.

When a measurable signal exists, even an imperfect one, markets can price it. Advertisers bid on keywords because keyword conversion rates could be estimated, compared against alternatives, and updated over time. The signal was noisy, and, frankly, often misleading. But it was consistent and competitive, and that meant the distortions it produced were at least systematic rather than arbitrary. Everyone priced off the same flawed proxy.

When that signal disappears, or moves into a channel that most participants cannot observe, the situation looks less like a noisy market and more like what Akerlof (1970) described for used cars. The problem is not that the content is low quality (although I have read some great articles rallying against AI slop, which is very real); it is that buyers and sellers have lost access to the information that would let quality be priced. In the current context, it's the seller who loses information about their own market position: a brand cannot optimize for a channel it cannot measure, and the brands that develop early proxies for AI visibility, even crude and inconsistent ones, will appear to be winning the channel regardless of whether their product merits the recommendation. That's a structural advantage of measurement over quality, and it's where this starts to have real welfare implications.

McKinsey found that a brand's own web properties account for only 5 to 10 percent of the sources AI search systems actually reference when generating answers (McKinsey & Company, 2025). The remainder comes from third-party content (reviews, forums, press coverage, editorial mentions): the same channels that last-touch attribution consistently undervalued, and that marketers underinvested in, for twenty years. The irony is uncomfortable: the channels digital marketing treated as secondary are now the primary inputs that determine whether an AI system recommends you.

Only 16% of brands today systematically track their visibility in AI-generated answers (McKinsey & Company, 2025). The remaining 84% are operating without a signal in a channel that is already material and growing.
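What "systematically tracking" looks like will vary. One crude proxy, of the kind that could give a brand an early read, is the share of sampled AI answers that mention it at all. The Python sketch below assumes the answer texts have already been collected for a fixed set of prompts by some means not shown here; the prompts, brand names, and answers are all hypothetical.

```python
import re
from collections import defaultdict

# Hypothetical sample: answer texts already collected (by whatever means) from
# AI assistants for a fixed set of prompts, several runs per prompt.
SAMPLES = {
    "best running shoes for flat feet": [
        "Acme and Globex both make stability models for flat feet.",
        "Many runners with flat feet prefer Acme.",
    ],
    "most durable trail running shoes": [
        "Globex is frequently recommended for rocky terrain.",
        "Forum reviews tend to favor Acme for durability.",
    ],
}


def mention_rates(answers_by_prompt, brands):
    """Share of sampled answers that mention each brand at least once.

    Uses case-insensitive whole-word matching, so "Acme" does not match
    "Acmeville". Brand names that begin or end with punctuation would need a
    different pattern, because regex word boundaries only sit next to
    letters, digits, or underscores.
    """
    patterns = {
        brand: re.compile(rf"\b{re.escape(brand)}\b", re.IGNORECASE)
        for brand in brands
    }
    hits = defaultdict(int)
    total = 0
    for answers in answers_by_prompt.values():
        for text in answers:
            total += 1
            for brand, pattern in patterns.items():
                if pattern.search(text):
                    hits[brand] += 1
    return {brand: (hits[brand] / total if total else 0.0) for brand in brands}


if __name__ == "__main__":
    print(mention_rates(SAMPLES, ["Acme", "Globex"]))
```

Here Acme appears in three of four sampled answers and Globex in two, giving rates of 0.75 and 0.5. A real program would need many more samples per prompt, since generated answers vary from run to run, and a mention is not the same thing as a recommendation.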

Takeaways

For marketers, the proxies that attribution modeling was built around are becoming less representative of the decisions you're actually trying to influence. That's not an argument for abandoning measurement but for being creative about what it should look like next. Channels that were historically justified by their measurability should be re-examined against what they're actually delivering, because for the first time in a while, the cost of ignoring the unmeasured channels is concrete rather than theoretical.

For economists, this market is worth watching as an empirical case study in what happens when a core measurement infrastructure degrades faster than its replacement emerges. Attribution markets that lose their primary signal will persist on inertia, overweighting the remaining observable proxies as those proxies become less predictive. The transition period produces the kind of systematic misallocation that appears in retrospect as obvious waste, but that rational individual actors have no incentive to exit unilaterally. How quickly the market corrects depends heavily on whether the platforms that mediate AI discovery choose to develop transparent measurement standards. Their incentives to do so are, at best, mixed.

For policymakers, the disclosure question has not received enough attention. The FTC's 2002 guidance on search advertising assumed a results page where organic and sponsored content could be labeled distinctly. AI-generated answers don't have a natural disclosure surface. A synthesized paragraph does not accommodate a small "Ad" label. If AI systems develop commercial arrangements that influence which brands appear in their answers (and the economic pressure to do so is substantial), the existing regulatory vocabulary for paid placement will need to be rebuilt from different premises. The sponsored link was at least visible. What replaces it may not be.

Works Cited:

Adobe Analytics. (2025). "Q2 2025 Insights: AI Referrals Surge Across Industries." Adobe Business Blog.

Akerlof, G. A. (1970). "The Market for 'Lemons': Quality Uncertainty and the Market Mechanism." *Quarterly Journal of Economics*, 84(3), 488–500.

Gartner. (2024). "Gartner Predicts Search Engine Volume Will Drop 25% by 2026, Due to AI Chatbots and Other Virtual Agents." Gartner Newsroom.

Grossman, S. J., & Stiglitz, J. E. (1980). "On the Impossibility of Informationally Efficient Markets." *American Economic Review*, 70(3), 393–408.

McKinsey & Company. (2025). "New Front Door to the Internet: Winning in the Age of AI Search." McKinsey.com.

Newman, N. (2026). *Journalism, Media, and Technology Trends and Predictions 2026*. Reuters Institute for the Study of Journalism, University of Oxford.